
I am a PhD researcher in 3D computer vision and machine learning with a background in Gaussian Splatting, 3D reconstruction, depth estimation, SLAM, and generative AI. I have worked across top research labs (NVIDIA, Disney, Meta, Huawei, ETH CVG), developing novel methods for robotics, AR/VR, and medical imaging. I completed my PhD in December 2025 under the guidance of Prof. Javier Civera at the Robotics Lab of the Universidad de Zaragoza. From September 2021 to March 2022, I did a research stay at the Computer Vision and Geometry Group (CVG) at ETH Zurich, Switzerland, directed by Prof. Marc Pollefeys, where I was supervised by Martin R. Oswald. I interned as a Research Scientist at Huawei Research Center (Jan–Jul 2024), Meta Reality Labs (Jul 2024–Mar 2025), and Disney Research (Mar–Jun 2025), all in Zurich, Switzerland. Currently, I am a Robotics Software intern at NVIDIA in Zurich.

Email / LinkedIn / Google Scholar / GitHub


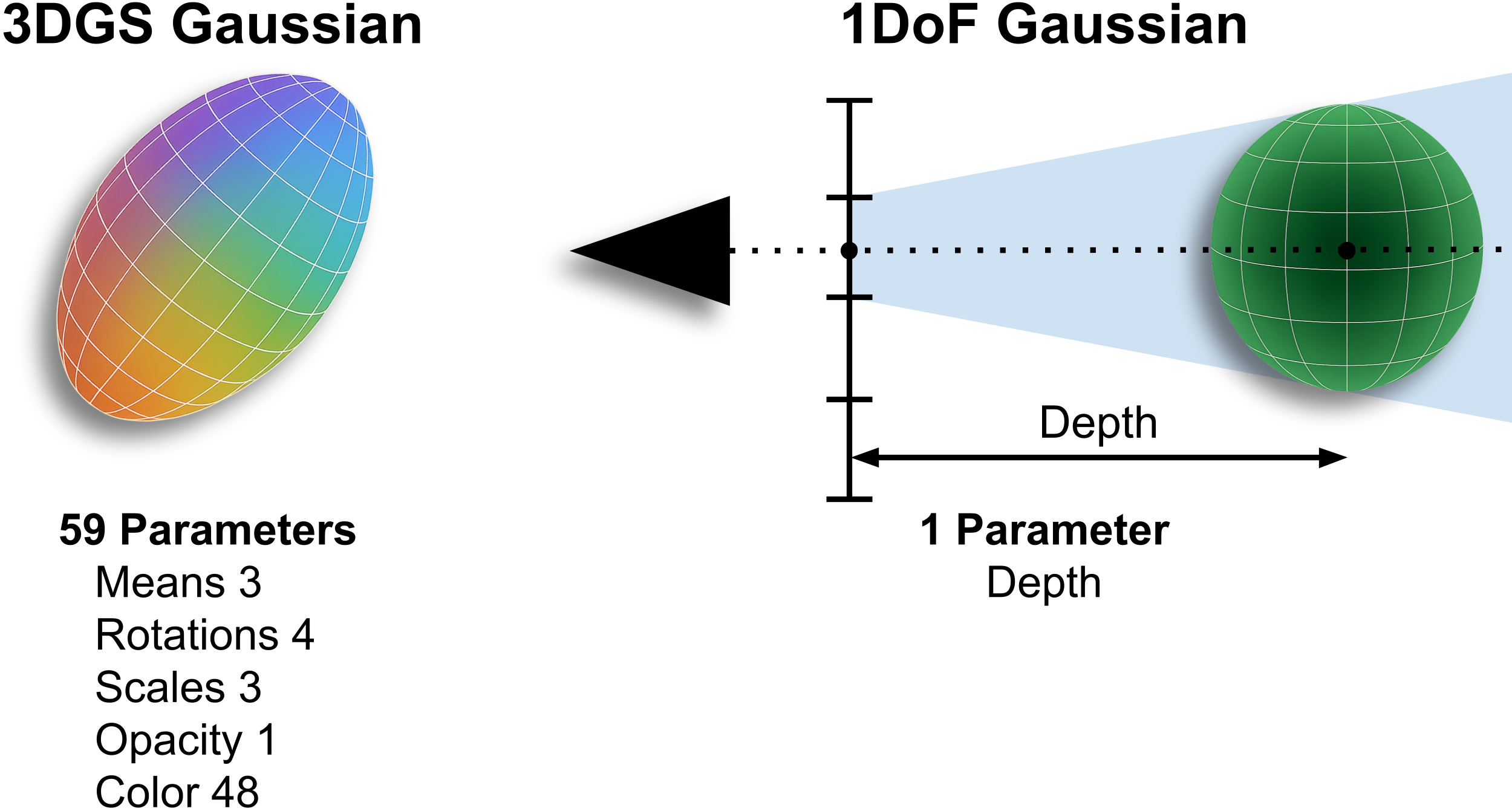

David Recasens, Robert Maier, Aljaz Bozic, Stephane Grabli, Javier Civera, Tony Tung, Edmond Boyer

CVPR 2026 3DMV

Project page / Paper / Code

PAGaS refines per-view depth maps using pixel-aligned Gaussians, each with a single degree of freedom along its viewing ray. It relies only on photometric constraints from a few adjacent views and captures unprecedented pixel-level detail in both object-centric and large-scale scenes.


David Recasens, Martin R. Oswald, Marc Pollefeys, Javier Civera

NeurIPS, 2023

Project page / arXiv paper / NeurIPS paper / Poster / Video / Code

The Drunkard’s Dataset is a challenging collection of synthetic data targeting visual navigation and reconstruction in deformable environments. The Drunkard’s Odometry is a novel monocular RGB-D deformable odometry method that decomposes the estimated optical flow into rigid-body camera motion and non-rigid scene deformation.


Javier Rodríguez-Puigvert, David Recasens, Javier Civera, Rubén Martínez-Cantín

MICCAI, 2022

Project page / MICCAI2022 paper / arXiv paper / Video demo

The first in-depth study of Bayesian deep networks for single-view depth estimation in colonoscopies.


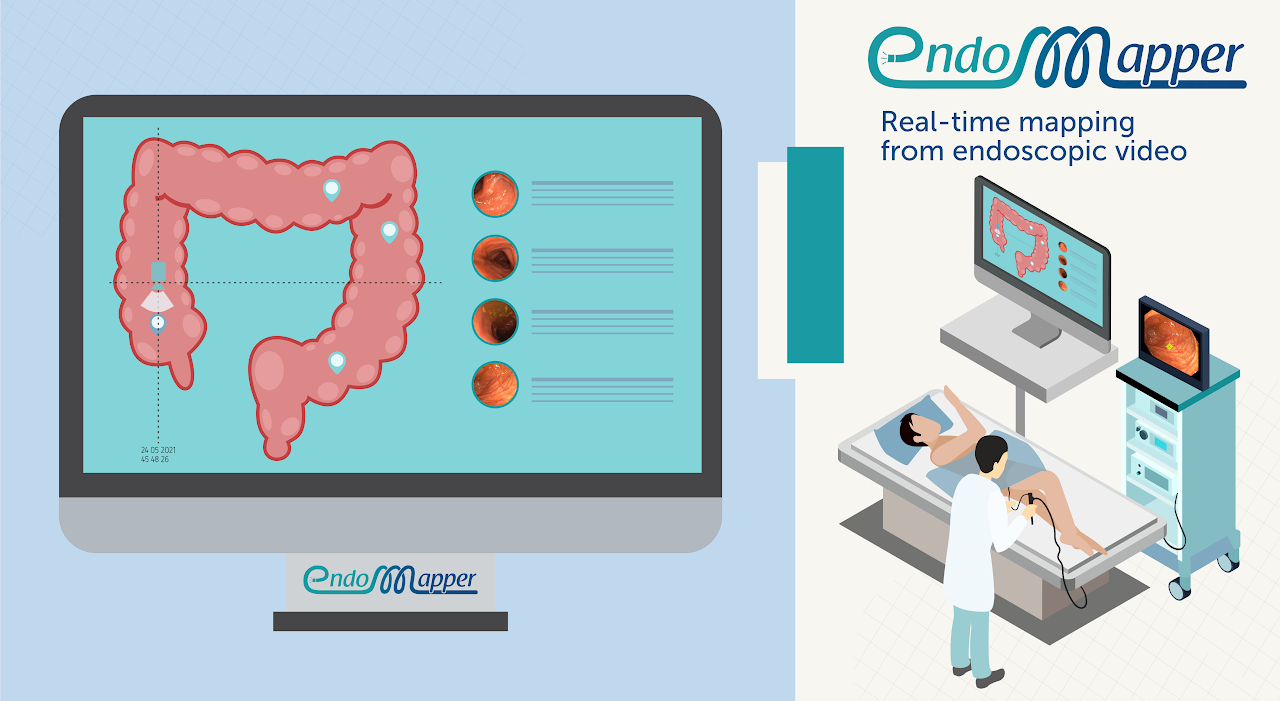

David Recasens, José Lamarca, José M. Fácil, José María M. Montiel, Javier Civera

RA-L and IROS, 2021

Project page / RA-L paper / arXiv paper / Video demo / Code

A pipeline that estimates the 6-degree-of-freedom camera pose and dense 3D scene models from monocular endoscopic videos.


Pablo Azagra, Carlos Sostres, Ángel Ferrández, David Recasens, et al.

Scientific Data (Nature), 2023

Project page / Paper

The first collection of complete endoscopy sequences acquired during regular medical practice, intended to support the development of visual SLAM methods.
